Raw Audio VAE

Sound Design Strategies for Latent Audio Space Explorations Using Deep Learning Architectures

Description

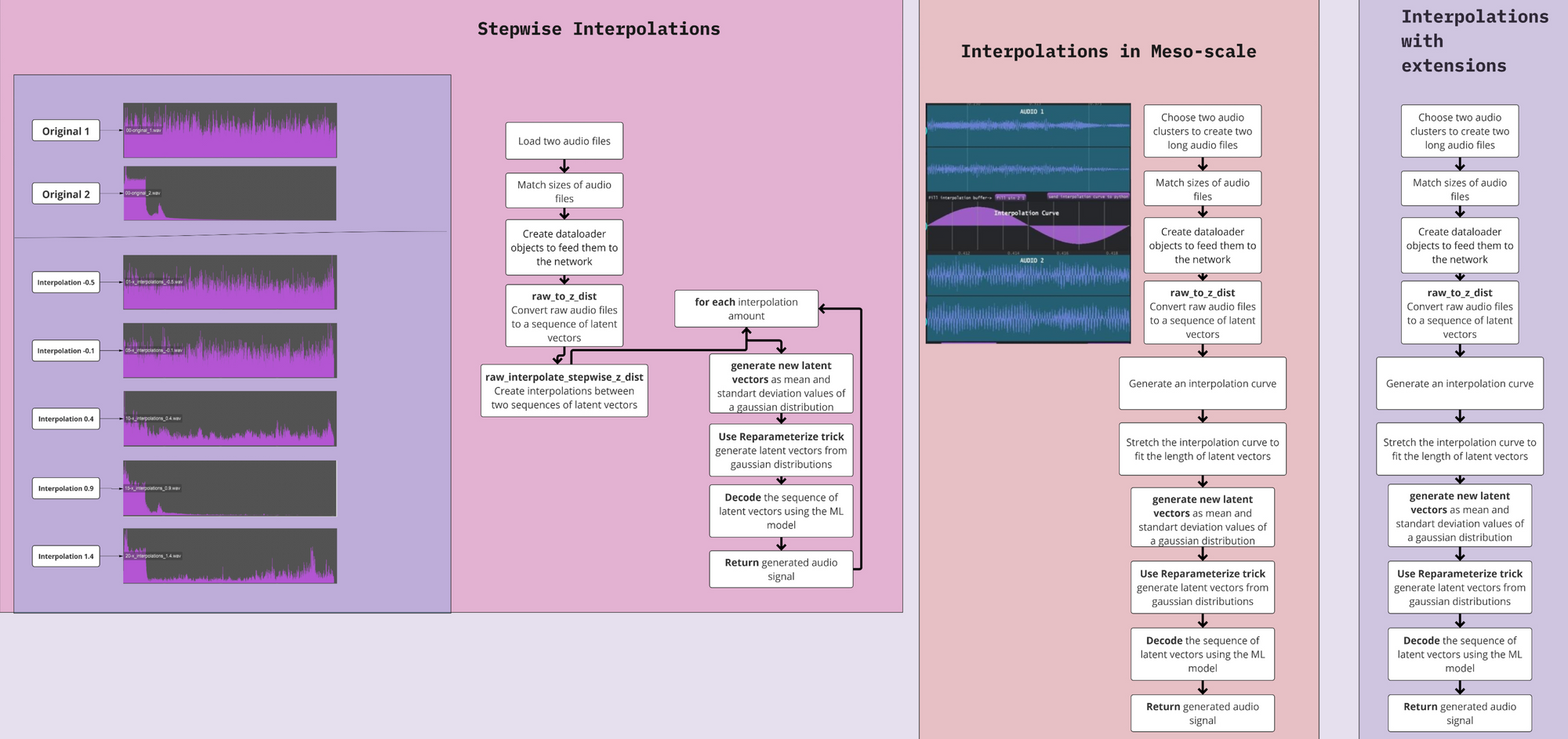

Research on Deep Learning applications in sound and music computing has gathered interest in recent years; however, there is still a missing link between these new technologies and how they can be incorporated into real-world artistic practices. In this work, we explore a well-known Deep Learning architecture called Variational Autoencoders (VAEs). These architectures have been used in many areas for generating latent spaces where data points are organized so that similar data points are located closer to each other. Previously, VAEs have been used for generating latent timbre spaces or latent spaces of symbolic music excerpts. Applying a VAE to audio features of timbre requires a vocoder to transform the timbre generated by the network into an audio signal, which is computationally expensive. In this work

Architecture

Examples

Two datasets were used for training: the improvisation dataset and the sonification dataset. The improvisation dataset consists of pose sequences and audio that were recorded while a dancer freely improvised to given music. The dancer is an expert with a specialisation in contemporary dance and improvisation. The music consists of short excerpts of royalty-free music, including experimental electronic music, free jazz, and contemporary classical music. The pose sequences were acquired using a markerless motion capture system (The Captury) in the iLab at MotionBank, University for Applied Research, Mainz. The recording is 10 minutes long, which corresponds to a sequence of 30000 poses. Each pose consists of 29 joints whose relative orientations are represented by quaternions.
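The figures above imply a pose rate and a per-pose data size, which the short sketch below works out. It assumes the poses are sampled uniformly over the whole recording and that each quaternion is stored as four numbers; the names (`pose_rate`, `values_per_pose`, `pose_index_to_time`) are illustrative and not taken from the project code.

```python
# Figures taken from the dataset description.
DURATION_S = 10 * 60   # recording length: 10 minutes, in seconds
NUM_POSES = 30000      # poses in the recording
NUM_JOINTS = 29        # joints per pose
QUAT_DIM = 4           # a quaternion has four components

# Derived quantities (assuming uniform sampling over the recording).
pose_rate = NUM_POSES / DURATION_S           # poses per second
values_per_pose = NUM_JOINTS * QUAT_DIM      # numbers needed for one pose
total_values = NUM_POSES * values_per_pose   # numbers in the whole sequence


def pose_index_to_time(index, rate=pose_rate):
    """Return the time in seconds at which pose `index` was captured."""
    return index / rate


print(pose_rate, values_per_pose, total_values)
```

Under these assumptions the recording runs at 50 poses per second, and each pose is a vector of 116 values.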

The sonification dataset contains the same pose sequences as the improvisation dataset. The audio of this dataset was created afterwards, through sonification, employing a very simple sound synthesis consisting of two sine oscillators controlled by the dancer’s hands. The frequency and amplitude of each oscillator are proportional to the height and velocity of the corresponding hand, respectively. The authors created this dataset to verify the performance of RAMFEM.
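The mapping described above can be sketched as follows. This is a minimal illustration, not the authors' synthesis code: the sample rate, the two proportionality constants, the choice of the y coordinate as height, and the amplitude clamp are all assumptions, and every function name is hypothetical.

```python
import math

SAMPLE_RATE = 48000     # audio samples per second (assumed)
POSE_RATE = 50          # poses per second (30000 poses over 10 minutes)
FREQ_PER_METRE = 400.0  # Hz per metre of hand height (assumed constant)
AMP_PER_SPEED = 0.25    # amplitude per m/s of hand speed (assumed constant)


def hand_speeds(positions, pose_rate=POSE_RATE):
    """Finite-difference hand speed in m/s per pose; the first pose gets 0."""
    speeds = [0.0]
    for prev, cur in zip(positions, positions[1:]):
        speeds.append(math.dist(prev, cur) * pose_rate)
    return speeds


def sonify_hand(positions, sample_rate=SAMPLE_RATE, pose_rate=POSE_RATE):
    """Render one sine oscillator from a list of (x, y, z) hand positions.

    Frequency follows hand height (taken to be y, an assumption) and
    amplitude follows hand speed. Both are held constant within a pose.
    """
    samples_per_pose = sample_rate // pose_rate
    phase = 0.0
    out = []
    for (_, y, _), speed in zip(positions, hand_speeds(positions, pose_rate)):
        freq = FREQ_PER_METRE * max(y, 0.0)
        amp = min(AMP_PER_SPEED * speed, 0.5)  # clamp so two hands sum to <= 1
        step = 2.0 * math.pi * freq / sample_rate
        for _ in range(samples_per_pose):
            out.append(amp * math.sin(phase))
            # Accumulate phase so the waveform stays phase-continuous
            # when the frequency changes from one pose to the next.
            phase = (phase + step) % (2.0 * math.pi)
    return out


def sonify(left, right):
    """Mix the left-hand and right-hand oscillators into one mono signal."""
    return [a + b for a, b in zip(sonify_hand(left), sonify_hand(right))]
```

For example, one second of poses in which the left hand rises steadily and the right hand stays still yields one second of audio in which only the left-hand oscillator is audible, since a stationary hand has zero velocity and therefore zero amplitude:

```python
left = [(0.0, 1.0 + 0.01 * i, 0.0) for i in range(50)]
right = [(0.3, 1.2, 0.0)] * 50
audio = sonify(left, right)
```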

Resources

Code

https://github.com/ktatar/rawaudiovae

Artworks

The artwork Coding the Latent by Kıvanç Tatar, performed at Kubus, ZKM, Karlsruhe, uses three interpolation strategies presented in this paper within a live coding environment.

Acknowledgements

This work was partially supported by the Wallenberg AI, Autonomous Systems and Software Program – Humanities and Society (WASP-HS), funded by the Marianne and Marcus Wallenberg Foundation and the Marcus and Amalia Wallenberg Foundation. Additionally, this research was previously supported by the Swiss National Science Foundation and the Canada Council for the Arts.